AI Is the New Compiler: Why Developers Who Don’t Learn It Will Be Left Behind

Natural language is now a programming interface, and your expertise transfers

By Travis Sparks, with insights from a Principal Software Manager at Microsoft

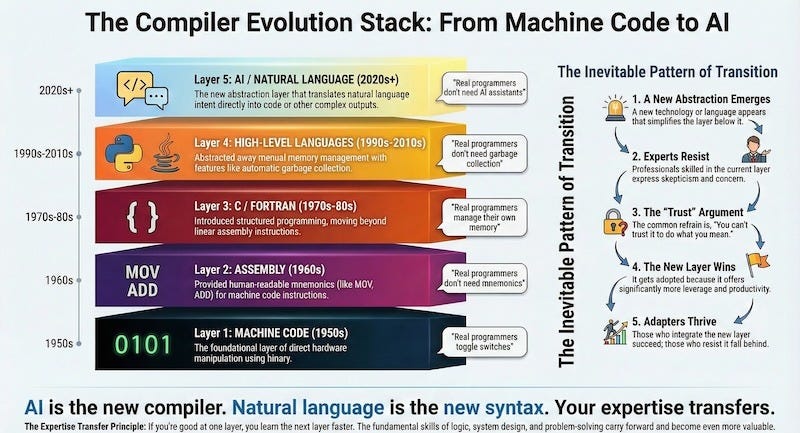

TL;DR: AI isn’t replacing programming. It’s the next layer in the compiler stack: Machine Code → Assembly → C → High-Level Languages → AI. Every layer transition had resisters who said “real programmers don’t need this.” They were wrong. The skills that made you good at C make you good at Python. The skills that made you good at code make you good at AI. This isn’t about becoming a “prompt engineer.” It’s about recognizing that natural language is now a programming interface, and your expertise transfers.

Hello, Sparklers!

In 1980, a lot of talented programmers thought they didn’t need C.

“Real programmers write assembly,” they said. “How can you trust something that generates machine code for you? You need to understand what the processor is actually doing.”

They were right about understanding the processor. They were wrong about everything else.

The Pattern We Keep Missing

Here’s something fascinating about technology history: we keep making the same mistake.

For a detailed timeline of programming language evolution, see History of Programming Languages on Wikipedia.

Each transition followed the same pattern:

New abstraction layer emerges

Experts in the current layer resist

“You can’t trust it to do what you mean”

New layer wins because it increases leverage

Old experts who adapted thrived; those who didn’t, didn’t

Every single transition had smart people saying the new thing was a crutch. Something “real programmers” didn’t need.

Why This Matters Even If You Don’t Code

The compiler evolution isn’t just a programming story. The same pattern shows up everywhere:

Card catalogs → search engines → AI synthesis

Manual ledgers → spreadsheets → BI tools → AI forecasting

Each step had resisters. Each step, the adapters won.

If you’re a consultant, marketer, healthcare professional, or business owner: the same transition is happening in your world. AI isn’t replacing your expertise. It’s becoming a layer that lets you apply your expertise faster and at greater scale.

Why Experts Resist

There’s a beautiful irony here: the people who understand the most about what’s being abstracted away are often the most skeptical.

When C came along, assembly programmers had legitimate concerns. You couldn’t see what instructions the compiler generated. You couldn’t optimize for specific CPU architectures. You lost control.

Every tool ever invented, from a screwdriver to a car, is a layer of abstraction that gives you leverage in exchange for some control. The assembly programmers’ concerns were all true. And all irrelevant to the larger trend.

Compilers got better. The productivity gain from higher-level abstractions was so massive that the edge cases stopped mattering for most use cases.

The resistance isn’t irrational. Experts have real knowledge of what the abstraction layer hides. They’ve debugged problems that only exist at their level. They’ve developed intuitions that seem to vanish when you move up.

But that expertise doesn’t vanish. It transforms.

The Expertise Transfer Principle

Here’s the insight most AI skeptics miss: if you’re really good at one layer, you learn the next layer faster. The fundamentals transfer.

When you learn assembly, you’re not just learning opcodes. You’re learning how to think in sequences, debug by understanding state, and reason about systems. Those skills transfer to C, then to Python. At each layer, you carry forward what matters.

The same transfer is happening with AI.

When you use AI to write code, proposals, or analysis, you’re not abandoning your expertise. You’re applying it through a new interface. Your understanding of what makes work good, how to structure problems, what to verify—all of that still matters.

AI is a compiler, not a replacement for thinking: a compiler that takes natural-language intent as input and produces output you can verify. But working with AI isn’t just talking to it. AI gets confused without structured, logical communication, so clear thinking up front produces better results.

You’re still working. Just at a higher level of abstraction.

The Real Engineering Challenge Remains

Today, it’s easy to build a tool using AI. But as my colleague points out, the core engineering challenge remains: how do you consistently deliver correct, reliable results at production scale?

Traditional software uses deterministic tests: pass or fail. AI systems are non-deterministic; the same input can produce different outputs from one run to the next. You can’t validate them with simple true/false checks. You need rubric-based evaluation systems, confidence metrics, and engineering discipline around releases.

This isn’t a programming problem. It’s an engineering problem: designing systems that are reliable, measurable, and safe under uncertainty. AI doesn’t remove the need for engineering judgment; it changes what that judgment focuses on.
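To make rubric-based evaluation with confidence metrics a little more concrete, here is a minimal Python sketch. Everything in it is a hypothetical illustration, not the colleague’s actual system: the rubric criteria and weights, the 0.8 pass threshold, the 0.6 release target, and `fake_model` (a random stand-in for a real model call). It scores each output against weighted criteria instead of a single pass/fail assertion, samples the model many times because outputs vary, and gates the release on the lower bound of a Wilson score interval for the pass rate rather than on one lucky run.

```python
import math
import random
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Criterion:
    """One rubric item: a name, a relative weight, and a check on the output text."""
    name: str
    weight: float
    check: Callable[[str], bool]


# Hypothetical rubric for a client follow-up email. Real rubrics are task-specific
# and often rely on human or model-based graders rather than string checks.
RUBRIC = [
    Criterion("under_150_words", 0.4, lambda text: len(text.split()) < 150),
    Criterion("mentions_proposal", 0.4, lambda text: "proposal" in text.lower()),
    Criterion("opens_with_greeting", 0.2,
              lambda text: text.lower().startswith(("hi", "hello", "dear"))),
]


def rubric_score(text: str, rubric: list[Criterion]) -> float:
    """Weighted fraction of criteria the output satisfies, in [0, 1]."""
    total = sum(c.weight for c in rubric)
    earned = sum(c.weight for c in rubric if c.check(text))
    return earned / total


def wilson_lower_bound(passes: int, n: int, z: float = 1.96) -> float:
    """Lower end of the Wilson score interval for a pass rate (z=1.96 is ~95%)."""
    if n == 0:
        return 0.0
    p = passes / n
    denom = 1 + z * z / n
    center = p + z * z / (2 * n)
    margin = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n))
    return (center - margin) / denom


def fake_model(rng: random.Random) -> str:
    """Hypothetical stand-in for a non-deterministic model call (not a real API)."""
    drafts = [
        "Hi Dana, just checking in on the proposal we sent last week.",
        "Hello Dana, following up on our proposal. Happy to answer any questions.",
        "Quick note: any thoughts yet?",  # scores 0.4: no proposal mention, no greeting
    ]
    return rng.choice(drafts)


def evaluate(samples: int, pass_threshold: float, seed: int = 0) -> tuple[float, float]:
    """Sample the model repeatedly; return (observed pass rate, Wilson lower bound)."""
    rng = random.Random(seed)
    passes = sum(
        rubric_score(fake_model(rng), RUBRIC) >= pass_threshold
        for _ in range(samples)
    )
    return passes / samples, wilson_lower_bound(passes, samples)


if __name__ == "__main__":
    rate, lower = evaluate(samples=200, pass_threshold=0.8)
    target = 0.6  # hypothetical release target for the pass rate
    # Release gate: ship only if the interval's lower bound clears the target,
    # so one lucky batch of samples is not enough.
    print(f"pass rate={rate:.2f}, lower bound={lower:.2f}, ship={lower >= target}")
```

In a real system the string checks would more likely be human reviewers or a model-based grader, and the threshold and target would come from your own quality requirements. The design choice worth keeping is the shape: graded scores, repeated sampling, and a release decision based on a confidence bound rather than a single result.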

What “Learning AI” Actually Means

Here’s where people get confused. They hear “learn AI” and think it means becoming a “prompt engineer” or memorizing magic phrases. It doesn’t.

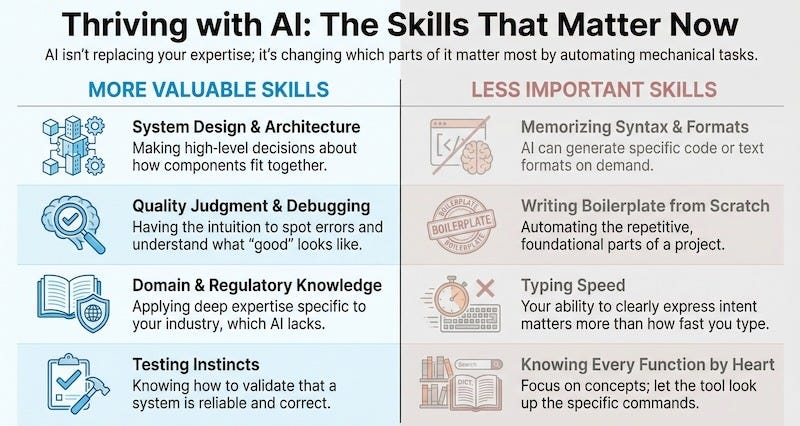

Whether you’re a developer or not, the skills are the same:

Understand the boundaries. AI excels at first drafts, boilerplate, pattern completion, research synthesis. It struggles with novel problems, your specific context, and catching its own mistakes. Knowing this boundary is half the battle.

Verify output. You know your field. You know what “good” looks like. AI gives you a starting point; your expertise tells you whether it’s right. That doesn’t change because AI produced it.

Express intent clearly. Not magic phrases—the same skill you use when writing docs or explaining to colleagues. “Write me a marketing email” gets generic output. “Write a follow-up email to a client who went quiet after our proposal, professional but warm, under 150 words” gets something useful.

For developers specifically: Focus on engineering AI systems, not just calling models. Because AI output varies from run to run, traditional pass/fail tooling breaks down. Invest in evaluation frameworks and system-level discipline.

The Fear, Reframed

Let me address the anxiety directly.

“AI will replace developers” is the wrong frame. Here’s the right one:

Developers who use AI will replace developers who don’t.

This isn’t a threat. It’s the same dynamic from every language transition. Developers who learned C didn’t replace assembly programmers because C was “better.” They replaced them because they could build more in less time.

The same principle applies beyond code. Marketers who use AI to draft faster will outpace those who don’t. Consultants who use AI to research faster will take more clients. Healthcare analysts who use AI to process data faster will catch things others miss.

That’s not “AI replacing you.” That’s you becoming more capable.

Skills That Transfer vs. Skills That Don’t

The pattern holds: foundational thinking matters more, mechanical execution matters less.

What Happens If You Don’t Learn It

In 2010, I watched senior developers dismiss mobile as “not real programming.” Windows desktop experts with decades of experience. They knew COM. They knew Win32. They knew things juniors never would.

By 2015, most had either adapted or left the industry.

Not because they were bad programmers. They bet wrong on what expertise would matter. They thought deep knowledge of the current layer would protect them.

The developers who thrived weren’t the ones who abandoned desktop. They carried their expertise forward: same engineering principles, new platform.

AI is another platform transition. A big one.

How to Start

If you’re a developer: Pick any AI coding assistant, such as Copilot, Claude, or ChatGPT, and use it for real work. Don’t study it; use it. The interface is natural language, the same language you already use every day, so there’s no textbook to learn first. Learn to communicate with it in the context of your own problems and domain. Notice when it helps and when it doesn’t. Pay attention to your prompts: vague requests get vague results, and clear specs get better code. Guiding it toward useful results is a skill that develops only through use in real scenarios.

If you’re not a developer: Open Claude or ChatGPT. Use it for something real this week: a client email, a report outline, research synthesis. Notice where it saves you time and where you have to fix its output. That’s you learning the boundaries.

If you lead a team: Start a conversation this week. Ask your team: “What would you build if the work took a tenth of the time?” Or: “What have we been blocked from doing because we couldn’t scale?” Their answers tell you where AI is already changing what’s possible.

The goal isn’t becoming an AI expert. It’s adding AI to your toolkit like you’ve added tools, frameworks, and platforms throughout your career.

The Opportunity and the Choice

AI is a tool, effectively a new abstraction layer, a “natural-language compiler.” It changes how we build systems, but it does not remove the need to solve engineering problems to productionize them. The methodology of engineering is evolving, not disappearing.

Every abstraction layer made work more accessible and more powerful at the same time. Assembly let people other than hardware engineers program. C made it practical to write systems software without hand-coding assembly. Python brought computing to scientists and analysts. AI continues this trend.

For experienced professionals (developers and non-developers alike), this is an opportunity. Your judgment, architecture sense, debugging intuition, and domain knowledge all become MORE valuable when the mechanical parts become easier. You’re not competing with AI. You’re being handed a force multiplier.

Every transition had a moment where experts chose: learn the new layer, or bet the old one stays dominant. We know how those bets turned out.

AI is the new compiler. Natural language is the new syntax. Your expertise transfers.

As my colleague puts it: “Everyone should learn to adapt to this new methodology. Not with fear, but with experimentation. There is no alternative path forward.”

We know which bet we’re making.

Travis Sparks is the founder of Sparkry AI, helping professionals navigate the AI transition. This article includes insights from a Principal Software Manager at Microsoft who leads teams building AI-powered engineering systems. It is part of our ongoing exploration of how AI changes work: not hype, not fear, just pattern recognition from people who’ve been through a few transitions.

Get more frameworks like this. Subscribe to Sparkry AI for weekly insights on how AI changes work.

Free subscribers get weekly frameworks and practical tips. Paid subscribers get my full Claude Code setup, early access to new tools, and a 1-hour coaching session.

What layer transition are you navigating? Leave a comment.

AI Is Not the New Compiler: A Rebuttal

TL;DR: The "AI is just the next compiler" analogy is rhetorically tidy and technically wrong. Compilers are deterministic, formally specified translators whose failure modes are bounded and debuggable. Large language models are stochastic pattern-matchers whose failure modes are unbounded, silent, and often invisible until production. Treating them as equivalent layers in an abstraction stack flatters the technology while obscuring a growing pile of empirical evidence — from METR's randomized trial, from GitClear's longitudinal code analysis, from Google's own DORA report, from Carnegie Mellon — that AI coding tools, as currently deployed, are not producing the productivity story being sold. The transition is real. The analogy is a sleight of hand. And the "adapt or die" framing is doing more persuasive work than the argument underneath it.